Data Centers in Space and the AI Power Shift

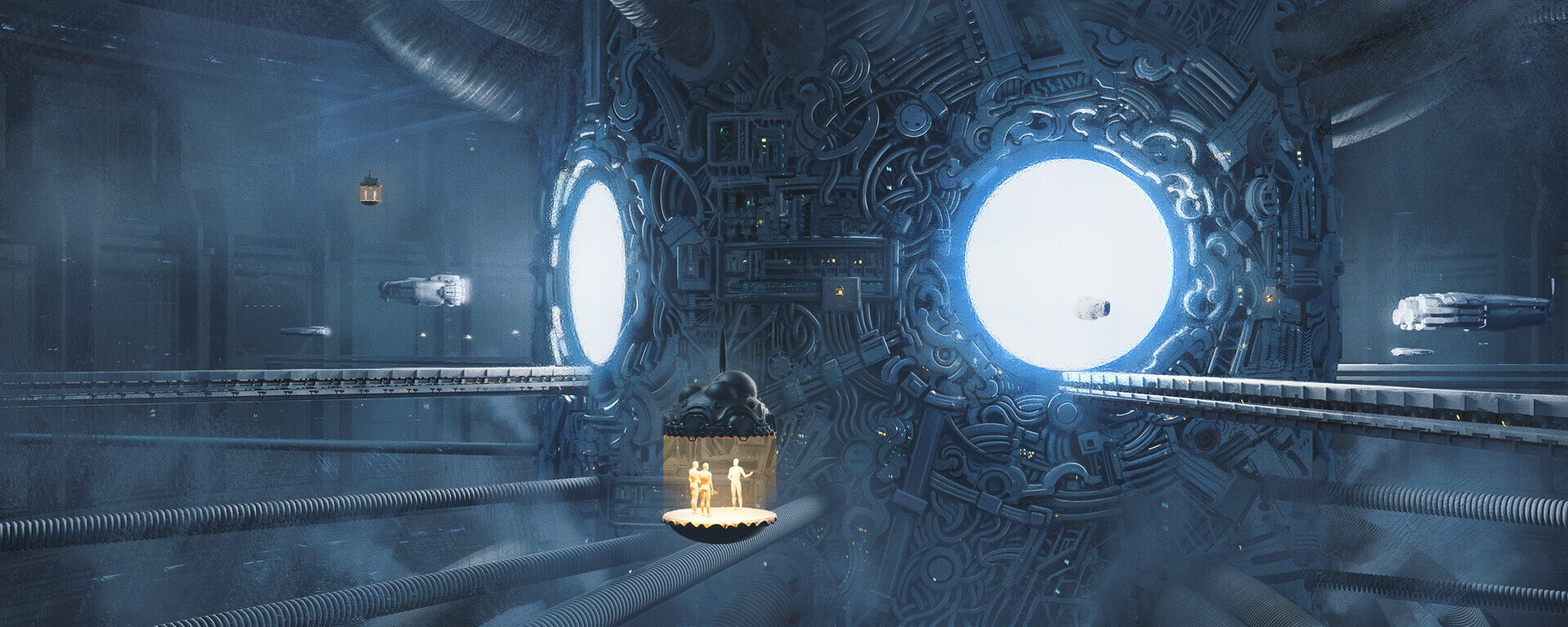

Data Centers in Space are emerging as a provocative answer to the energy and cooling demands of AI computing. Advocates argue that space-based data centers for AI could draw on near-continuous solar power and reject heat by radiating it to space, extending cloud computing beyond Earth. Yet questions persist: can AI data centers be built in orbit at scale, and what are the benefits and challenges of doing so? As feasibility studies multiply, the debate centers on why data centers in space are impractical today, and on whether solar-powered orbital facilities can eventually overcome the cost, sustainability, and climate concerns.

Data Centers in Space: AI Vision or Reality?

The rapid acceleration of artificial intelligence workloads has transformed global infrastructure priorities. Hyperscale data centers already consume vast quantities of electricity, with AI model training and inference intensifying power density requirements. As terrestrial grids strain under growing digital demand, the concept of Data Centers in Space has shifted from speculative fiction to structured commercial investigation.

The proposal is deceptively simple: relocate high-intensity computing infrastructure into orbit, harness abundant solar energy, and exploit the cold vacuum of space for passive thermal regulation. In theory, space-based data centers for AI could bypass terrestrial land constraints, reduce dependence on fossil-fuel-based grids, and eliminate water-intensive cooling systems. However, the engineering and economic realities remain complex.

The Drivers Behind Space-Based Data Centers for AI

Artificial intelligence systems increasingly rely on high-performance computing clusters equipped with advanced GPUs and specialized accelerators. These systems generate significant heat and demand stable, uninterrupted energy supply. Terrestrial data centers mitigate this through elaborate cooling systems, redundant grid connections, and proximity to renewable energy installations. Despite efficiency gains, energy consumption continues to rise.

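The appeal of AI computing in outer space lies in three principal drivers: abundant solar exposure, heat rejection by radiation, and potential decoupling from climate-sensitive infrastructure. Above the atmosphere, solar panels receive sunlight unattenuated by weather or air. In suitable orbits, such as dawn-dusk sun-synchronous orbits, they can stay illuminated nearly continuously, whereas a typical low Earth orbit passes through Earth's shadow on every revolution. With no air to carry heat away by convection, all waste heat must leave through radiators. This removes water and chillers from the design, but it makes radiator area a primary sizing constraint.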
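To make the radiative cooling argument concrete, the Stefan-Boltzmann law gives a first-order estimate of how much radiator surface a given heat load needs. The sketch below is illustrative only: the function name, the 0.9 emissivity, the 300 K radiator temperature, and the 1 MW heat load are assumptions, and absorbed sunlight and Earth's infrared are ignored, so real radiators would need to be larger.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W / (m^2 K^4)


def radiator_area_m2(heat_w, radiator_temp_k, emissivity=0.9,
                     sink_temp_k=0.0, sides=1):
    """Radiator area needed to reject heat_w watts by radiation alone.

    Assumptions (not from the article): a flat grey-body radiator at a
    uniform temperature, radiating from `sides` faces to surroundings at
    sink_temp_k, with no absorbed sunlight or Earth infrared.
    """
    if radiator_temp_k <= sink_temp_k:
        raise ValueError("radiator must be hotter than its surroundings")
    # Net radiated power per square metre of one face.
    flux_w_per_m2 = emissivity * SIGMA * (radiator_temp_k**4 - sink_temp_k**4)
    return heat_w / (flux_w_per_m2 * sides)


# Hypothetical 1 MW compute module with radiators held at 300 K.
area = radiator_area_m2(1_000_000, 300.0)
print(f"{area:.0f} m^2")
```

Under these assumptions a single-sided radiator for 1 MW comes to roughly 2,400 square metres. Because radiated power scales with the fourth power of temperature, running the radiators hotter shrinks the area quickly, at the price of hotter electronics or an added heat pump.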

These advantages underpin the growing discourse around space data center infrastructure. Yet feasibility extends far beyond theoretical thermodynamics.

Orbital Data Centers Feasibility: Technical Realities

The feasibility of orbital data centers hinges on launch economics, radiation shielding, maintenance logistics, and data latency. The cost of transporting heavy computing hardware into orbit remains substantial, even as reusable launch vehicles reduce per-kilogram expenses. A fully operational AI data center module would require structural reinforcement, radiation-hardened electronics, and autonomous repair capabilities.

Radiation exposure presents a nontrivial challenge. High-energy particles in space can degrade semiconductor components and cause data corruption. Shielding solutions add mass, increasing launch costs. Alternatively, hardened chip designs raise manufacturing complexity and expense.

Maintenance further complicates operational models. Terrestrial facilities benefit from rapid hardware replacement and on-site technicians. In orbit, robotic servicing or crewed missions would be required, dramatically altering cost structures. The economic viability of space-based data centers for AI therefore depends not only on technological feasibility but also on lifecycle cost modeling.

Latency introduces additional constraints. Cloud computing beyond Earth requires robust high-bandwidth communication links between orbital platforms and terrestrial networks. Although satellite communication networks continue to improve, transmitting large AI datasets with minimal delay remains a challenge for latency-sensitive applications.
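A rough sense of the latency and throughput constraints comes from two simple quantities: the speed-of-light propagation delay over the ground-to-satellite path, and the time needed to move a dataset over a link of a given rate. The following is a minimal sketch; the function names, the 550 km and 35,786 km altitudes, and the 10 Gbit/s link rate are illustrative assumptions, and processing, queuing, and routing delays are left out.

```python
import math

C_KM_PER_S = 299_792.458   # speed of light in vacuum
EARTH_RADIUS_KM = 6371.0   # mean Earth radius


def slant_range_km(altitude_km, elevation_deg=90.0):
    """Ground-station-to-satellite distance at a given elevation angle."""
    re = EARTH_RADIUS_KM
    r = re + altitude_km
    el = math.radians(elevation_deg)
    return math.sqrt(r**2 - (re * math.cos(el))**2) - re * math.sin(el)


def one_way_delay_ms(altitude_km, elevation_deg=90.0):
    """Propagation delay only; excludes processing and queuing."""
    return slant_range_km(altitude_km, elevation_deg) / C_KM_PER_S * 1000.0


def transfer_time_s(dataset_bytes, link_bits_per_s):
    """Time to push a dataset through a link at its full nominal rate."""
    return dataset_bytes * 8 / link_bits_per_s


print(f"LEO 550 km overhead: {one_way_delay_ms(550):.2f} ms")
print(f"GEO 35,786 km overhead: {one_way_delay_ms(35_786):.1f} ms")
print(f"1 TB over 10 Gbit/s: {transfer_time_s(1e12, 10e9):.0f} s")
```

With these inputs, the one-way propagation delay is about 1.8 ms for a satellite directly overhead in low Earth orbit and about 119 ms from geostationary altitude. Propagation delay from low orbit is therefore small; the harder limits are link bandwidth, ground-station visibility, and the number of hops to the end user.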

Can AI Data Centers Be Built in Space Orbit?

From an engineering standpoint, the answer is conditional rather than absolute. Modular space stations already support complex scientific operations. Power generation via photovoltaic arrays is well established. Thermal regulation through radiators is routine in spacecraft design. Therefore, foundational technologies exist.

However, scaling these technologies to support hyperscale AI workloads demands significant advancement. High-density racks, petabit networking, and fault-tolerant storage systems would need redesign for microgravity environments. Structural integrity under orbital debris risk must also be considered. Micrometeoroids and space debris pose collision hazards capable of catastrophic damage.

Autonomous systems would be essential. Machine learning-driven diagnostics and robotic manipulation systems would replace conventional IT service models. AI computing in outer space may ironically depend on AI for its own survival and operational continuity.

Benefits and Challenges of Space Data Centers for AI

The benefits and challenges of space data centers for AI must be assessed through an integrated energy, economic, and environmental framework.

Potential benefits include reduced reliance on terrestrial fossil-fuel grids, fewer land-use conflicts, and lower freshwater consumption for cooling. In a suitably chosen orbit, space-based solar arrays can generate power with little or no interruption, avoiding the intermittency of wind and ground-based solar installations. Over the long term, such infrastructure could mitigate climate impact by moving energy-intensive computing off terrestrial grids and away from stressed land and water resources.
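How uninterrupted orbital solar generation really is depends on the orbit. A common first approximation treats Earth's shadow as a cylinder and computes the fraction of a circular orbit spent in eclipse from the altitude and the beta angle (the angle between the orbital plane and the direction to the Sun). The sketch below uses that approximation; the function name and the sample altitude are illustrative assumptions, and the penumbra and orbital eccentricity are ignored.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def eclipse_fraction(altitude_km, beta_deg=0.0):
    """Fraction of a circular orbit spent in Earth's shadow.

    Cylindrical-shadow approximation: no penumbra, spherical Earth.
    beta_deg is the angle between the orbital plane and the Sun direction.
    """
    re = EARTH_RADIUS_KM
    r = re + altitude_km
    beta = math.radians(beta_deg)
    # Beyond this beta angle the orbit never enters the shadow cylinder.
    if abs(math.sin(beta)) >= re / r:
        return 0.0
    ratio = math.sqrt(altitude_km**2 + 2 * re * altitude_km) / (r * math.cos(beta))
    return math.acos(min(1.0, ratio)) / math.pi


for beta in (0, 30, 60, 80):
    print(beta, round(eclipse_fraction(550, beta), 3))
```

At an assumed 550 km altitude with the Sun in the orbital plane, this gives about 0.37, meaning more than a third of each orbit is spent in shadow and batteries or load shedding are needed. The eclipse disappears only once the beta angle exceeds roughly 67 degrees at that altitude, which is why orbit selection matters so much to the "continuous solar" argument.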

Challenges, however, are formidable. Launch emissions contribute to atmospheric pollution. Manufacturing radiation-resistant components increases resource intensity. Debris accumulation exacerbates orbital congestion risks. Additionally, the capital expenditure required for deployment may limit participation to major technology conglomerates or state-backed enterprises.

Thus, while the sustainability and climate case for space data centers appears promising, a comprehensive lifecycle analysis is required to determine whether the emissions saved in operation outweigh those generated during deployment.
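The lifecycle question reduces, at its simplest, to a break-even calculation: how many years of operation are needed before avoided operating emissions repay the one-time emissions of manufacturing and launch. The sketch below shows only the structure of that comparison. The function name and every number in it are hypothetical placeholders, not measured data.

```python
def breakeven_years(deployment_tco2e, annual_ground_tco2e,
                    annual_orbit_tco2e=0.0):
    """Years of operation needed to repay one-time deployment emissions.

    deployment_tco2e: one-time emissions from manufacturing and launch.
    annual_ground_tco2e: yearly emissions of the equivalent ground facility.
    annual_orbit_tco2e: yearly emissions of the orbital facility
        (for example, servicing and replacement launches).
    Returns float('inf') if the orbital option never saves emissions.
    """
    annual_saving = annual_ground_tco2e - annual_orbit_tco2e
    if annual_saving <= 0:
        return float("inf")
    return deployment_tco2e / annual_saving


# Placeholder inputs chosen only to illustrate the arithmetic.
years = breakeven_years(deployment_tco2e=1000.0,
                        annual_ground_tco2e=250.0,
                        annual_orbit_tco2e=50.0)
hardware_lifetime_years = 5.0  # assumed service life
print(years, years <= hardware_lifetime_years)
```

If the break-even time exceeds the hardware's service life, the orbital facility is a net emitter over its lifetime. As terrestrial grids decarbonize, the annual ground figure falls, which lengthens the break-even time.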

Why Data Centers in Space Are Impractical Today

Despite accelerating interest, several factors explain why data centers in space are impractical today. First, terrestrial renewable integration is expanding rapidly. Large-scale solar, wind, hydroelectric, and geothermal projects continue to decarbonize grid infrastructure. Relocating computing to orbit may not offer immediate cost advantages compared to optimizing Earth-based facilities powered by renewables.

Second, launch capacity remains constrained relative to projected AI hardware demand. Even with improved rocket reusability, transporting thousands of tons of computing equipment annually would require unprecedented scale.
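The scale problem can be expressed as simple arithmetic: annual mass divided by payload per launch gives the launch cadence, and mass times cost per kilogram gives the launch bill. The sketch below is a back-of-the-envelope illustration. The function names are hypothetical, and the 5,000-tonne annual mass, 100-tonne payload, and per-kilogram prices are placeholder values rather than specifications of any real vehicle.

```python
import math


def launches_per_year(annual_mass_tonnes, payload_tonnes_per_launch):
    """Whole launches needed to lift the annual mass."""
    return math.ceil(annual_mass_tonnes / payload_tonnes_per_launch)


def launch_cost_usd(mass_tonnes, cost_per_kg_usd):
    """Launch cost for a given mass at a flat per-kilogram price."""
    return mass_tonnes * 1000 * cost_per_kg_usd


annual_mass = 5000  # tonnes per year, placeholder
print(launches_per_year(annual_mass, 100))  # placeholder 100 t payload

# Effect of an order-of-magnitude drop in per-kilogram price.
for price in (1000, 100):  # USD per kg, placeholders
    print(price, launch_cost_usd(annual_mass, price))
```

With these placeholder inputs the cadence is 50 launches per year, and cutting the per-kilogram price tenfold cuts the launch bill from 5 billion to 500 million dollars. Shielding and structural mass add directly to the numerator, so every design choice that adds mass raises both figures.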

Third, cybersecurity and governance frameworks are underdeveloped. Jurisdictional authority over orbital computing assets remains ambiguous under international space law. Data sovereignty concerns could complicate commercial adoption.

Finally, risk concentration must be considered. A single catastrophic orbital event could disable significant computational capacity, creating systemic digital disruptions.

The Future of Orbital Data Centers: Solar Power and Cooling

Looking ahead, the future of solar-powered, radiatively cooled orbital data centers may depend on advances in three domains: launch cost reduction, autonomous robotics, and modular design. If launch costs decline by an order of magnitude, the economics shift dramatically. Autonomous in-orbit assembly by robotic systems could enable large-scale infrastructure without a constant human presence.

Innovations in lightweight composite materials and radiation-hardened processors may further reduce mass and enhance durability. In such a scenario, space-based data centers for AI could function as specialized computational hubs for energy-intensive model training, while latency-sensitive inference workloads remain Earth-bound.

Hybrid architectures may emerge. Space platforms could handle batch processing and large-scale AI model training powered by continuous solar input. Terrestrial facilities would manage real-time applications and regional redundancy. Cloud computing beyond Earth would then represent an extension rather than replacement of current infrastructure.

Commercial Viability and Strategic Positioning

Commercial interest in Data Centers in Space reflects broader strategic competition in the technology sector. Control over next-generation computing infrastructure equates to influence in AI development, defense analytics, and global cloud markets. Governments and private enterprises may pursue orbital infrastructure not solely for efficiency but for strategic resilience.

Insurance markets, regulatory frameworks, and public-private partnerships will shape adoption trajectories. If orbital infrastructure becomes integrated into national security architectures, funding streams could accelerate development irrespective of immediate profitability.

Nevertheless, large-scale commercial viability requires predictable returns. Investors demand clarity on depreciation cycles, operational reliability, and market demand. Until these metrics stabilize, feasibility assessments of orbital data centers will remain exploratory rather than definitive.

Sustainability and Climate Impact Assessment

The sustainability and climate case for space data centers remains nuanced. On one hand, relocating high-energy AI workloads to orbit could reduce terrestrial carbon emissions if powered entirely by solar arrays. On the other hand, rocket launches emit black carbon and other pollutants at high altitudes, with uncertain atmospheric effects.

A comprehensive assessment must account for manufacturing emissions, launch frequency, orbital maintenance, and end-of-life deorbiting processes. Without responsible decommissioning strategies, orbital debris proliferation could undermine environmental benefits.

Thus, sustainability claims must be validated through transparent carbon accounting frameworks rather than speculative projections.

Conclusion: Vision, Strategy, or Speculation?

Data Centers in Space represent a convergence of technological ambition and environmental urgency. The concept addresses legitimate concerns surrounding AI energy demand and terrestrial resource constraints. Yet practical implementation faces engineering, economic, regulatory, and environmental barriers.

The question is not whether AI data centers can be built in space orbit in isolated demonstrations. Rather, the central inquiry concerns scalable, commercially viable deployment capable of competing with rapidly improving Earth-based infrastructure.

For now, Data Centers in Space remain at the frontier of possibility, neither pure fantasy nor immediate inevitability. As AI workloads expand and sustainability pressures intensify, orbital infrastructure may evolve from conceptual exploration to strategic reality. Until then, the debate over feasibility, practicality, and long-term impact will continue to shape the future of AI computing in outer space and the broader evolution of cloud computing beyond Earth.
