Google Cloud AI Frontiers: The Three AI Shifts

Google Cloud AI Frontiers represents a strategic shift in enterprise artificial intelligence. It centers on three frontiers that AI models must balance: intelligence, response time, and cost scalability. As organizations confront the challenges of deploying AI in production, Google Cloud's model strategy emphasizes optimizing across all three dimensions rather than any one in isolation. Through Vertex AI and its enterprise capabilities, the platform positions itself at the intersection of performance, economics, and production readiness.

Google Cloud AI Frontiers: Redefining Enterprise Model Capability

The competitive landscape of artificial intelligence has entered a new phase. Model size alone no longer defines leadership. Instead, enterprise buyers evaluate AI platforms across three decisive dimensions: capability, speed, and scalability. Google Cloud AI Frontiers encapsulates this transition by formalizing a framework around what industry analysts describe as the three frontiers AI models must address to remain viable in production environments.

At the center of this evolution is Google Cloud, which has articulated a structured approach to AI deployment that aligns research breakthroughs with commercial execution. The conversation is no longer about experimental demos. It is about infrastructure-grade reliability, predictable cost structures, and measurable performance gains.

Understanding the Three Frontiers AI Models Must Address

The first frontier concerns raw intelligence. This dimension measures reasoning depth, contextual understanding, multimodal processing, and domain specialization. Organizations deploying large language models increasingly demand systems capable of complex decision-support, not merely conversational fluency. Intelligence in this context reflects model architecture, training data breadth, and evaluation benchmarks.

The second frontier is response time. Even the most advanced AI system loses commercial value if latency disrupts user workflows. In enterprise environments such as financial services, healthcare analytics, or supply chain optimization, sub-second responsiveness often determines whether a system is adopted at all. Intelligence, speed, and cost scalability therefore form a balancing equation rather than a set of isolated performance metrics.

The third frontier focuses on cost scalability. Scaling from pilot projects to global deployments introduces significant infrastructure demands. Without cost-efficient inference pipelines and resource orchestration, advanced AI becomes economically unsustainable. This is where Google Cloud's AI model strategy differentiates itself, by integrating optimization at both the hardware and orchestration layers.

Google Cloud AI Model Strategy: Intelligence Meets Infrastructure

The Google Cloud AI model strategy aligns advanced research models with cloud-native architecture. Instead of treating AI models as standalone artifacts, the platform embeds them within a broader ecosystem of APIs, orchestration services, and security controls.

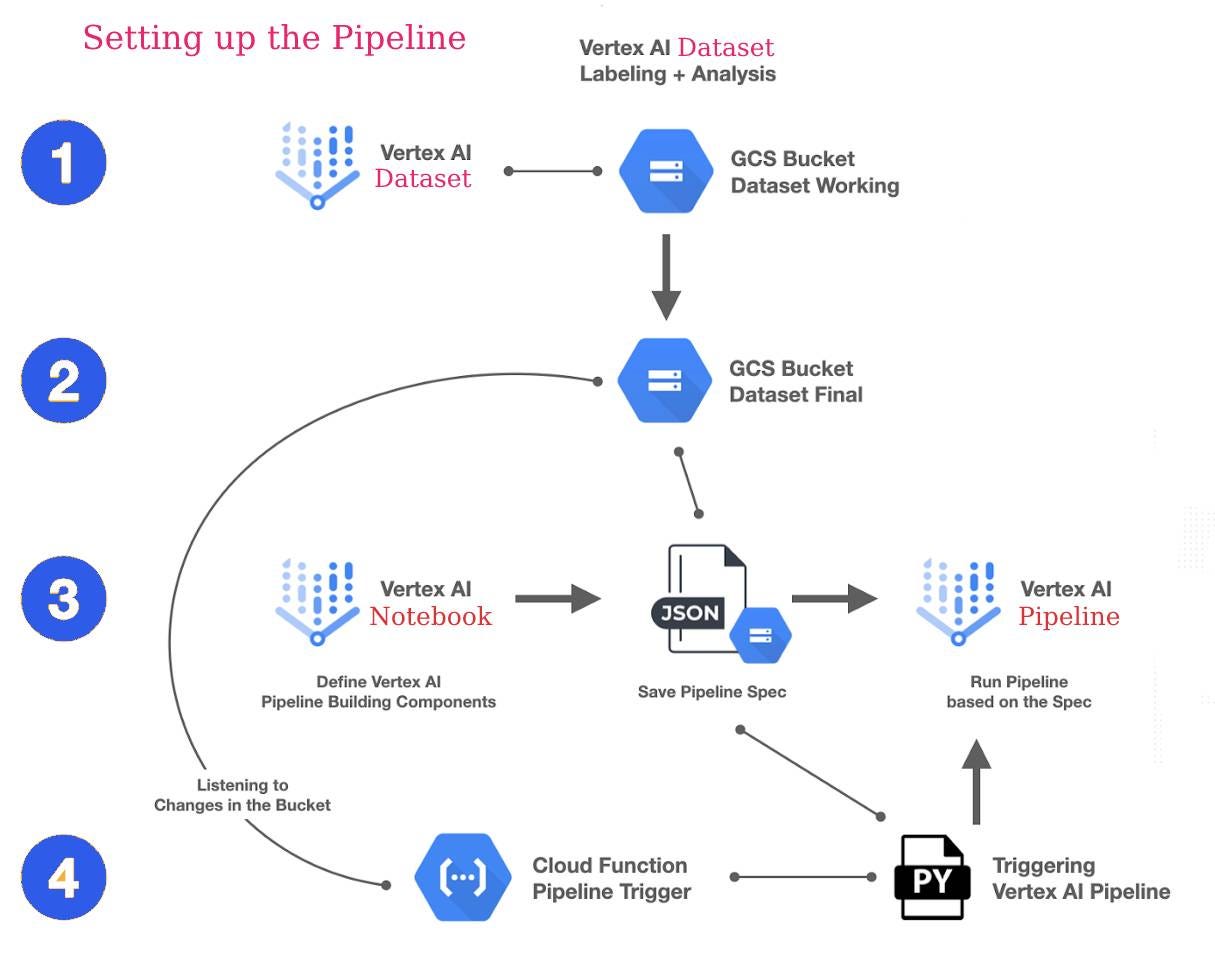

Through Vertex AI, enterprises gain access to model customization, deployment pipelines, and lifecycle management tools that reduce friction between experimentation and production. Vertex AI's enterprise capabilities extend beyond training and inference. They include governance controls, dataset management, evaluation frameworks, and monitoring dashboards. This integrated approach addresses the deployment challenges that frequently derail enterprise adoption. Data residency requirements, compliance mandates, and cross-regional scaling constraints demand infrastructure-level solutions rather than isolated AI endpoints.

Raw Intelligence vs Response Time vs Cost Scalability in AI Models

A recurring tension in artificial intelligence engineering involves trade-offs. Larger models typically exhibit higher reasoning performance but introduce heavier compute demands. Smaller models may deliver speed advantages yet compromise contextual depth. The equation becomes particularly complex when enterprises attempt to globalize deployments.

Google Cloud AI Frontiers reframes this dilemma by encouraging optimization across all three axes simultaneously. The architecture leverages distributed processing, hardware acceleration, and workload-aware scaling policies. Rather than maximizing one metric at the expense of others, the strategy seeks equilibrium. In financial modeling scenarios, for example, high intelligence may be necessary for risk analysis, while real-time responsiveness is essential for trading environments. Meanwhile, global scale requires predictable per-query cost structures. Aligning these demands is central to long-term viability.
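One way to make the three-axis trade-off concrete is to treat each candidate model as a point in (quality, latency, cost) space and keep only the options that no other option beats on every axis. The following is a minimal sketch of that idea, not a description of how Google Cloud selects models. The `ModelProfile` type, the model names, and every number are hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical summary of one candidate model (all values are made up)."""
    name: str
    quality: float      # higher is better, e.g. an evaluation score in [0, 1]
    latency_ms: float   # lower is better
    cost_per_1k: float  # lower is better, currency units per 1,000 queries


def dominates(a: ModelProfile, b: ModelProfile) -> bool:
    """True if `a` is at least as good as `b` on every axis and better on one."""
    no_worse = (
        a.quality >= b.quality
        and a.latency_ms <= b.latency_ms
        and a.cost_per_1k <= b.cost_per_1k
    )
    strictly_better = (
        a.quality > b.quality
        or a.latency_ms < b.latency_ms
        or a.cost_per_1k < b.cost_per_1k
    )
    return no_worse and strictly_better


def pareto_front(models: list[ModelProfile]) -> list[ModelProfile]:
    """Keep only models that no other model dominates, preserving input order."""
    return [m for m in models if not any(dominates(o, m) for o in models)]


# Illustrative candidates only; these are not measurements of real models.
CANDIDATES = [
    ModelProfile("large", quality=0.92, latency_ms=1800, cost_per_1k=12.0),
    ModelProfile("medium", quality=0.85, latency_ms=600, cost_per_1k=3.0),
    ModelProfile("small", quality=0.74, latency_ms=150, cost_per_1k=0.5),
    ModelProfile("legacy", quality=0.70, latency_ms=700, cost_per_1k=4.0),
]

if __name__ == "__main__":
    for model in pareto_front(CANDIDATES):
        print(model)
```

In this made-up data set the "legacy" option drops out because "medium" is better on all three axes. The remaining options each represent a different, defensible balance, and choosing among them depends on the workload.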

The Google Vertex AI Framework for Real-World AI Applications

Vertex AI integrates experimentation, tuning, deployment, and monitoring into a unified workflow aimed at real-world applications. Production AI systems must evolve continuously, adapting to new datasets, compliance standards, and usage patterns.

Vertex AI's enterprise capabilities enable model versioning, automated retraining, and continuous evaluation pipelines. These mechanisms help ensure that intelligence improvements do not degrade response performance or inflate operational expenditure. The framework embeds observability metrics that allow enterprises to quantify intelligence, speed, and cost scalability in practical terms. Crucially, the system supports hybrid and multi-cloud configurations, recognizing that enterprise AI rarely operates within a single infrastructure boundary. This flexibility reduces vendor lock-in risks while enhancing geographic redundancy.
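The requirement that quality gains must not degrade latency or inflate cost can be expressed as a simple promotion gate in a continuous evaluation pipeline. The sketch below is plain Python and does not use any Vertex AI API. The `EvalResult` type, the `should_promote` function, and the 5% tolerance defaults are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    """Hypothetical evaluation summary for one model version."""
    quality: float         # higher is better
    p95_latency_ms: float  # lower is better
    cost_per_1k: float     # lower is better


def should_promote(
    current: EvalResult,
    candidate: EvalResult,
    max_latency_regression: float = 0.05,  # assumed tolerance: 5%
    max_cost_regression: float = 0.05,     # assumed tolerance: 5%
) -> bool:
    """Promote only if quality improves and latency and cost stay within tolerance."""
    improves = candidate.quality > current.quality
    latency_ok = candidate.p95_latency_ms <= current.p95_latency_ms * (
        1 + max_latency_regression
    )
    cost_ok = candidate.cost_per_1k <= current.cost_per_1k * (
        1 + max_cost_regression
    )
    return improves and latency_ok and cost_ok


if __name__ == "__main__":
    baseline = EvalResult(quality=0.80, p95_latency_ms=400, cost_per_1k=2.0)
    retrained = EvalResult(quality=0.83, p95_latency_ms=410, cost_per_1k=2.05)
    print(should_promote(baseline, retrained))
```

A real pipeline would likely use statistical tests on the metrics rather than single-number comparisons. The point here is only that all three frontiers are checked before a new version replaces the old one.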

Enterprise AI Deployment Challenges and Frontiers

Despite rapid progress in generative AI, enterprise adoption remains uneven. Deployment complexity, governance uncertainty, and integration friction represent persistent obstacles. Many organizations struggle with data silos that inhibit model accuracy. Others encounter compliance issues when scaling across jurisdictions.

Google Cloud AI Frontiers addresses these deployment challenges by aligning AI services with established cloud security standards. Identity management, encryption controls, and audit trails become integral to model operations rather than afterthoughts. Moreover, cost forecasting tools provide transparency into inference expenditure. This transparency is critical for CFO-level decision-making. AI investments must demonstrate measurable ROI, not speculative innovation value.

Performance Economics: The Commercial Investigation

From a commercial perspective, Google Cloud AI Frontiers positions AI not merely as a technological capability but as an economic lever. The total cost of ownership for AI systems extends beyond compute usage. It encompasses model fine-tuning cycles, maintenance overhead, monitoring infrastructure, and compliance adaptation.

By embedding optimization at the orchestration level, the Google Cloud AI model strategy reduces redundant compute consumption. Elastic scaling policies allocate resources dynamically based on demand. This elasticity directly impacts margin sustainability for enterprises integrating AI into customer-facing products.
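The core of a demand-based elastic scaling policy can be sketched in a few lines: estimate how many serving replicas the current request rate needs, add headroom, and clamp the result to configured bounds. This is an illustrative calculation under stated assumptions, not Google Cloud's autoscaling algorithm. The function name, the 20% headroom, and the replica limits are all hypothetical.

```python
import math


def target_replicas(
    requests_per_sec: float,
    per_replica_capacity: float,
    min_replicas: int = 1,   # assumed floor so the service stays warm
    max_replicas: int = 20,  # assumed ceiling that caps spend
    headroom: float = 0.2,   # assumed 20% buffer for traffic spikes
) -> int:
    """Return the replica count needed for the given demand, within bounds."""
    if per_replica_capacity <= 0:
        raise ValueError("per_replica_capacity must be positive")
    if requests_per_sec < 0:
        raise ValueError("requests_per_sec must be non-negative")
    needed = math.ceil(requests_per_sec * (1 + headroom) / per_replica_capacity)
    return max(min_replicas, min(max_replicas, needed))


if __name__ == "__main__":
    # Hypothetical traffic levels in requests per second.
    for demand in (0, 100, 5000):
        print(demand, target_replicas(demand, per_replica_capacity=50))
```

The upper bound is what keeps cost predictable: when demand exceeds the ceiling, the policy trades latency for a fixed spend rather than scaling without limit.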

The commercial investigation therefore centers on efficiency ratios. Intelligence improvements must correlate with revenue generation or cost reduction. Response time enhancements must translate into user retention gains. Scalability efficiencies must support geographic expansion without exponential infrastructure expense.

Competitive Positioning in the AI Cloud Market

Within the broader cloud ecosystem, Google Cloud AI Frontiers competes in a landscape defined by rapid iteration cycles. Cloud providers increasingly emphasize AI as a core differentiator. However, the differentiation no longer rests solely on model benchmarks.

The strategic narrative revolves around production readiness. Enterprises seek platforms capable of orchestrating thousands of concurrent AI workflows without degradation. Reliability metrics, uptime guarantees, and compliance certifications weigh heavily in procurement evaluations. Google Cloud’s emphasis on the three frontiers reinforces a narrative of balanced optimization. Instead of highlighting only model scale, the messaging integrates intelligence, speed, and cost scalability as co-equal pillars.

The Strategic Implications for Enterprise Leaders

For CTOs and CIOs, Google Cloud AI Frontiers introduces a structured lens for AI investment evaluation. Decision-making shifts from experimentation enthusiasm toward infrastructure calculus. Model selection must align with workload characteristics, latency tolerances, and budget constraints.

The framework encourages scenario-based evaluation. Customer support automation, predictive maintenance analytics, and financial forecasting each impose distinct performance requirements. Balancing raw intelligence against response time and cost scalability becomes a context-specific exercise. This structured perspective reduces implementation risk. Rather than over-provisioning compute resources or underestimating latency demands, enterprises can calibrate AI systems with precision.
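Scenario-based evaluation can be sketched as constraint matching: each workload states the minimum quality it needs and the latency and cost it can tolerate, and the cheapest model that satisfies all three is chosen. The code below is a minimal illustration. The `ModelOption` and `Workload` types, the thresholds, and the model figures are hypothetical and do not describe any real product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelOption:
    """Hypothetical candidate model (illustrative values only)."""
    name: str
    quality: float
    latency_ms: float
    cost_per_1k: float


@dataclass(frozen=True)
class Workload:
    """Hypothetical requirements for one business scenario."""
    name: str
    min_quality: float
    max_latency_ms: float
    max_cost_per_1k: float


def select_model(
    workload: Workload, options: list[ModelOption]
) -> Optional[ModelOption]:
    """Return the cheapest option meeting every constraint, or None if none fits."""
    feasible = [
        o for o in options
        if o.quality >= workload.min_quality
        and o.latency_ms <= workload.max_latency_ms
        and o.cost_per_1k <= workload.max_cost_per_1k
    ]
    return min(feasible, key=lambda o: o.cost_per_1k) if feasible else None


OPTIONS = [
    ModelOption("large", quality=0.92, latency_ms=1800, cost_per_1k=12.0),
    ModelOption("medium", quality=0.85, latency_ms=600, cost_per_1k=3.0),
    ModelOption("small", quality=0.74, latency_ms=150, cost_per_1k=0.5),
]

# The three scenarios named in the text, with assumed thresholds.
WORKLOADS = [
    Workload("customer support automation", 0.70, 300, 1.0),
    Workload("predictive maintenance analytics", 0.80, 1000, 5.0),
    Workload("financial forecasting", 0.90, 5000, 20.0),
]

if __name__ == "__main__":
    for w in WORKLOADS:
        chosen = select_model(w, OPTIONS)
        print(w.name, "->", chosen.name if chosen else "no feasible model")
```

Returning `None` when nothing fits is deliberate: it signals that the requirements themselves need revisiting, rather than silently picking a model that violates a constraint.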

Long-Term Outlook: Beyond Model Size

Artificial intelligence innovation has historically followed a trajectory of scale escalation. Larger datasets and deeper architectures have driven benchmark improvements. Yet the maturation of enterprise AI demands multidimensional optimization.

Google Cloud AI Frontiers reflects a recognition that sustainable leadership requires operational excellence. Intelligence gains must coexist with rapid response pipelines and economically viable scaling mechanisms. Vertex AI operationalizes this philosophy by embedding governance, monitoring, and lifecycle management into the AI stack. The next wave of AI competition will likely focus on efficiency ratios rather than parameter counts. Enterprises will prioritize vendors capable of demonstrating measurable performance gains without disproportionate infrastructure costs.

Conclusion: A Structural Shift in AI Evaluation

Google Cloud AI Frontiers signals a structural evolution in artificial intelligence strategy. The focus on three frontiers (intelligence, speed, and scalability) introduces a disciplined framework for enterprise adoption. By integrating Vertex AI's enterprise capabilities within a broader Google Cloud AI model strategy, the platform addresses enterprise deployment challenges with infrastructure-level precision.

In an environment defined by rapid innovation and intense competition, balanced optimization emerges as the defining metric. Intelligence alone no longer guarantees leadership. Response time and cost scalability determine whether AI transitions from experimental promise to sustained commercial advantage. As organizations refine AI procurement strategies, Google Cloud AI Frontiers stands as a benchmark for multidimensional evaluation, aligning technological advancement with operational and economic realities.
